Artificial intelligence company OpenAI has announced significant changes to a collaboration agreement with the U.S. Department of Defense, following a wave of criticism from employees, ethics experts, and civil society groups. The deal, initially revealed earlier this month, involved the use of OpenAI's tools for cybersecurity and data analysis tasks at the Pentagon. The news sparked intense debate about the ethical boundaries of AI and the role of tech companies in military affairs, leading the company to revise the terms of the collaboration.

The controversy traces back to OpenAI's founding principles, which committed the company to developing artificial general intelligence (AGI) safely and for the benefit of humanity, with explicit safeguards against uses that could cause harm or enable 'autonomous weaponization.' The initial agreement with the U.S. military, although limited to non-lethal areas such as cyber defense and logistical optimization, was perceived by many as a dangerous departure from those principles. Critics argued that even 'non-lethal' uses in a military context could normalize the relationship between AI and warfare, opening the door to more controversial applications in the future.

Spending on AI and machine learning by the U.S. Department of Defense has grown rapidly in recent years, with a budget request in the billions of dollars for 2024. In this ecosystem, OpenAI, with its advanced language models like GPT-4, represents a strategic asset. However, the company has maintained a usage policy that explicitly prohibits activities with a high risk of physical harm, including weapon development. The ambiguity of the military agreement put this policy under scrutiny.

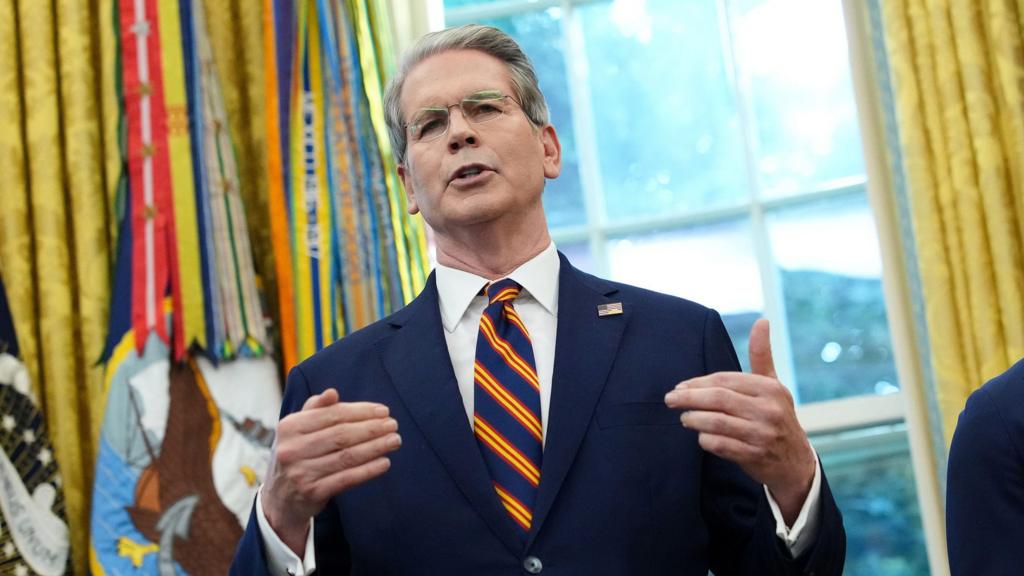

In statements to the press, an OpenAI spokesperson said: 'We have listened to the concerns raised by our community and external partners. While we believe in AI's potential to enhance national security in areas such as defense against cyberattacks, we must ensure that any collaboration unequivocally and transparently aligns with our commitment to safety and benefit. Therefore, we have revised the agreement to include stricter limits, external audits, and a termination clause if any deviation from our ethical standards is identified.'

The impact of this decision is multifaceted. On one hand, it temporarily quells internal and external dissent, preserving OpenAI's image as an ethically conscious company. On the other hand, it raises broader questions about the feasibility of maintaining absolute barriers in a sector where dual-use (civilian and military) technology is the norm. Competitors such as Google and Microsoft have faced similar dilemmas over military projects, such as Google's controversial Project Maven, suggesting this will not be the last confrontation between the AI industry and its conscience.

In conclusion, OpenAI's reconsideration of its collaboration with the U.S. military highlights the ongoing tension between technological innovation, commercial imperatives, and ethical responsibility. While the modification of the agreement represents a victory for advocates of responsible AI, it also reveals the constant pressure that major government contracts exert on tech companies. The episode serves as a reminder that, in the age of AI, governance and oversight frameworks must evolve at the same pace as technical capabilities. Navigating this uncharted territory will require continuous and transparent dialogue between companies, governments, and civil society.